Investigating Asynchronous Audio and Video in MPEG-DASH Streams

A step-by-step guide to determining, testing, and exploring asynchronous audio and video in MPEG-DASH streams.

Introduction

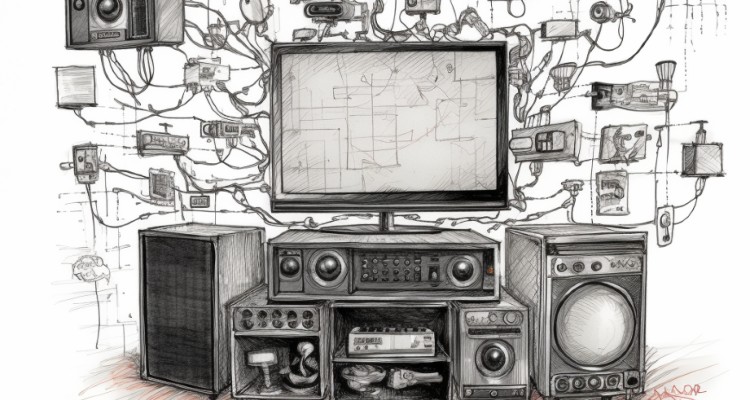

Asynchronous audio and video playback occurs when the audio samples presented by the video player do not align with the corresponding video frames in the stream. A small desync of only a few frames can go unnoticed. However, as the offset grows, it becomes easy to detect and irritating to watch. There are two major types of audio and video desync:

- Constant, where the shift between the audio and video streams remains consistent throughout the entire playback of the stream, and

- Growing, where the desync grows progressively during the playback.

When investigating asynchronous playback in MPEG-DASH streams, we have to perform the following steps:

1/ Determining the type of the desync

- Does the audio stream go ahead of the video, or does the video stream go ahead of the audio?

- Is the amount of the desync the same with every playback attempt?

- What is the amount of the desync – how many video frames or seconds?

- Is the amount of the desync constant throughout the playback, or does it grow?

- Is the desync present in all the ABR (Adaptive Bit Rate) layers?

- In the case of an MPEG-DASH stream with DVR, does the desync change after seeking to a different stream position?

We have to perform the above-listed measurements on the video player at the very end of the streaming chain.
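Once the offsets have been measured at the player, the answers to the questions above can be summarized mechanically. The sketch below assumes you have recorded pairs of (playback position, A/V offset) in seconds, with a positive offset meaning the audio is ahead of the video; the function name, the sign convention, and both tolerances are hypothetical choices, not part of any tool. It expresses the latest offset in video frames and fits a least-squares line through the measurements to tell a constant desync from a growing one.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class DesyncReport:
    kind: str                  # "constant" or "growing"
    direction: str             # "audio ahead", "video ahead" or "in sync"
    last_offset_s: float       # offset at the latest measurement, in seconds
    last_offset_frames: float  # the same offset expressed in video frames
    drift_s_per_min: float     # change of the offset per minute of playback


def classify_desync(samples, fps, sync_tolerance_s=0.02, drift_tolerance_s_per_min=0.01):
    """Summarize (playback_position_s, offset_s) measurements.

    Assumed convention: offset_s > 0 means the audio is ahead of the video.
    The tolerances are illustrative defaults, not standard values.
    """
    if len(samples) < 2:
        raise ValueError("need at least two measurements")
    samples = sorted(samples)
    xs = [t for t, _ in samples]
    ys = [o for _, o in samples]
    x_mean, y_mean = mean(xs), mean(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("measurements must come from different playback positions")
    # Least-squares slope of the offset over playback time (seconds per second).
    slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)) / sxx
    drift = slope * 60.0
    last = ys[-1]
    if abs(last) <= sync_tolerance_s:
        direction = "in sync"
    elif last > 0:
        direction = "audio ahead"
    else:
        direction = "video ahead"
    kind = "growing" if abs(drift) > drift_tolerance_s_per_min else "constant"
    return DesyncReport(kind, direction, last, last * fps, drift)
```

For example, three measurements of an 80 ms audio lead taken 10 minutes apart on a 25 fps stream are reported as a constant desync of about two frames with the audio ahead. Repeating the measurements per ABR layer and after a DVR seek answers the remaining questions in the list.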

2/ Testing the video player

3/ Investigating one high-resolution source

In a transcoding scenario, the high-resolution video source should have perfect audio and video sync. The transcoder usually takes one high-resolution stream and converts it into multiple streams with different resolutions, bitrates, and qualities. In this case, it is fairly simple to determine whether the transcoder is causing the desync: if the source has no audio and video desync while the output does, the transcoder is the module to blame.
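One quick but partial check for this scenario is to compare the audio/video start-time offset of the source with that of each transcoded output. The sketch below shells out to ffprobe with `-show_entries stream=codec_type,start_time -of json` and assumes every input is a file or URL that ffprobe can open; the helper names and the one-frame tolerance are hypothetical. It only detects a constant offset introduced at the start of the stream; a growing desync still has to be measured at the player.

```python
import json
import subprocess


def parse_start_times(ffprobe_json: str) -> dict:
    """Return the earliest start_time (seconds) per stream type from ffprobe JSON."""
    starts = {}
    for stream in json.loads(ffprobe_json).get("streams", []):
        kind = stream.get("codec_type")
        start = stream.get("start_time")
        # Skip non-A/V streams and streams without a usable start_time.
        if kind not in ("audio", "video") or start in (None, "N/A"):
            continue
        value = float(start)
        starts[kind] = min(value, starts.get(kind, value))
    return starts


def probe_start_times(path: str) -> dict:
    """Run ffprobe on a file or URL and parse the per-type start times."""
    result = subprocess.run(
        ["ffprobe", "-v", "error",
         "-show_entries", "stream=codec_type,start_time",
         "-of", "json", path],
        capture_output=True, text=True, check=True,
    )
    return parse_start_times(result.stdout)


def av_start_offset(starts: dict) -> float:
    """Audio start minus video start, in seconds."""
    return starts["audio"] - starts["video"]


def transcoder_suspect(source: dict, output: dict, fps: float) -> bool:
    """True if the output's A/V offset differs from the source's by more than one frame.

    The one-frame tolerance is an illustrative choice.
    """
    return abs(av_start_offset(output) - av_start_offset(source)) > 1.0 / fps
```

A typical call would be `transcoder_suspect(probe_start_times("source.ts"), probe_start_times("output_720p.mp4"), fps=25)`, where both file names are placeholders. Keeping the parsing separate from the subprocess call makes it easy to reuse saved ffprobe output.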

4/ Investigating multiple sources with different resolutions and bitrates

The investigation is more complicated when multiple input streams are repackaged to produce an ABR MPEG-DASH stream. The packager is responsible for re-multiplexing the pre-encoded streams, so the streams with different resolutions must be precisely aligned in time. Without that alignment, the packager cannot keep the different streams in sync. To better understand why alignment is so important, let’s look at the MPEG-DASH format.

There are many variations of MPEG-DASH streams, but the most common one carries multiple video streams with different resolutions and bitrates and one or more audio streams with different bitrates. The format enables players to switch dynamically between the video resolutions, depending on the network capabilities of the receiving device. Audio switching works the same way, and switching between video streams is entirely independent of switching between audio streams. Because the audio bitrate is usually negligible compared to the video bitrate, most MPEG-DASH streams carry only one audio stream. Since MPEG-DASH allows the video and audio layers to be switched independently, every audio stream must be precisely aligned with every video stream.
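To see how many video/audio combinations a player can end up with, you can list the Representations in the MPD. The sketch below uses Python's standard `xml.etree.ElementTree` with the MPEG-DASH namespace `urn:mpeg:dash:schema:mpd:2011`; it classifies media by the `contentType` or `mimeType` attribute of the AdaptationSet (falling back to the Representation), and the helper names are hypothetical. Every pair it returns is a combination the player may select, so every pair has to be in sync.

```python
import xml.etree.ElementTree as ET

NS = {"mpd": "urn:mpeg:dash:schema:mpd:2011"}


def _media_type(element) -> str:
    """Derive "video"/"audio" from contentType or the mimeType prefix."""
    content_type = element.get("contentType")
    if content_type:
        return content_type
    mime = element.get("mimeType", "")
    return mime.split("/")[0] if mime else ""


def list_layers(mpd_xml: str) -> dict:
    """Map media type to a list of (representation id, bandwidth) tuples."""
    root = ET.fromstring(mpd_xml)
    layers = {}
    for aset in root.iterfind(".//mpd:AdaptationSet", NS):
        for rep in aset.iterfind("mpd:Representation", NS):
            # The type may be declared on the Representation instead of the set.
            kind = _media_type(aset) or _media_type(rep)
            layers.setdefault(kind, []).append(
                (rep.get("id"), int(rep.get("bandwidth", 0)))
            )
    return layers


def av_combinations(layers: dict) -> list:
    """All (video id, audio id) pairs a player could combine."""
    return [(v, a)
            for v, _ in layers.get("video", [])
            for a, _ in layers.get("audio", [])]
```

With two video layers and one audio layer there are two pairs to verify; adding a second audio bitrate doubles that number, which is one more reason most streams carry a single audio stream.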

To confirm that the source streams are compliant and aligned well enough to create an MPEG-DASH stream, we have to check the following parameters:

- Time-alignment: Each video frame should have the same timestamp in all layers. The same applies to audio: corresponding audio frames in the different layers should have the same timestamp.

- Closed GOP: The video streams should be encoded with closed GOPs (Groups Of Pictures). The MPEG-DASH stream is divided into multiple segments, and each segment should start with an intra-coded (I) frame so that it can be decoded correctly from its start. This is possible only if the GOPs in the video streams are closed, meaning that no frame in a segment refers to frames in a previous segment.

- Constant GOP length: The positions of the I frames in each video stream mark the start of the individual segments. Because the player switches quality levels between the different layers, the segments of the different layers, and therefore the I frames of the video streams, must be aligned. An easy way to achieve this is to give all video streams the same constant GOP length and to disable the encoder's insertion of I frames on scene-change detection.
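Once the per-layer timestamps are available, the time-alignment and constant-GOP checks above reduce to comparing lists. The sketch below assumes you have already extracted sorted timestamps in seconds for each layer (for example with ffprobe), either for all frames or only for keyframes; the function names and the tolerance are hypothetical. It cannot prove that the GOPs are closed, because that depends on frame references rather than timestamps.

```python
def misaligned_layers(layers: dict, tolerance_s: float = 0.001) -> list:
    """Return names of layers whose timestamps differ from the first layer's.

    layers maps a layer name to its sorted list of timestamps in seconds
    (all frames, or only keyframes, depending on what is being checked).
    The default tolerance is an illustrative value.
    """
    names = list(layers)
    if not names:
        return []
    reference = layers[names[0]]
    bad = []
    for name in names[1:]:
        stamps = layers[name]
        if len(stamps) != len(reference) or any(
            abs(a - b) > tolerance_s for a, b in zip(stamps, reference)
        ):
            bad.append(name)
    return bad


def gop_lengths(keyframes: list, fps: float) -> set:
    """Distinct GOP lengths, in frames, between consecutive keyframes."""
    return {round((b - a) * fps) for a, b in zip(keyframes, keyframes[1:])}


def has_constant_gop(keyframes: list, fps: float) -> bool:
    """True if every complete GOP has the same length."""
    return len(gop_lengths(keyframes, fps)) <= 1
```

Running `misaligned_layers` once on keyframe lists and once on full frame lists covers both the segment alignment and the per-frame time alignment. An extra keyframe inserted on a scene change in one layer shows up as both a misaligned layer and a non-constant GOP.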

5/ Simplifying the scenario

- Disable DVR: DVR increases the size of the manifest files tremendously. Disabling DVR makes them smaller but also removes the ability to seek within the stream.

- Disable DRM: If DRM is present, disabling it rules out any DRM-related issues.

- Reduce the number of MPEG-DASH layers: Use this simplification cautiously, because it matters which stream serves as the audio source.
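For a static (on-demand) manifest, one way to reduce the number of layers without reconfiguring the packager is to serve a trimmed copy of the MPD that keeps only one video Representation. The sketch below uses Python's standard `xml.etree.ElementTree`; the function name and the Representation ids are hypothetical, and it leaves all audio untouched so that the audio source does not change. A live packager rewrites its MPD continuously, so in that case the reduction would have to be made in the packager configuration instead.

```python
import xml.etree.ElementTree as ET

MPD_NS = "urn:mpeg:dash:schema:mpd:2011"
NS = {"mpd": MPD_NS}
# Keep the default namespace on output instead of writing "ns0:" prefixes.
ET.register_namespace("", MPD_NS)


def _is_video(element) -> bool:
    return (element.get("contentType") == "video"
            or element.get("mimeType", "").startswith("video/"))


def keep_single_video_layer(mpd_xml: str, keep_id: str) -> str:
    """Hypothetical helper: drop every video Representation except keep_id."""
    root = ET.fromstring(mpd_xml)
    found = False
    for period in root.iterfind("mpd:Period", NS):
        for aset in list(period.iterfind("mpd:AdaptationSet", NS)):
            reps = list(aset.iterfind("mpd:Representation", NS))
            video = [r for r in reps if _is_video(aset) or _is_video(r)]
            for rep in video:  # audio and other media are left untouched
                if rep.get("id") == keep_id:
                    found = True
                else:
                    aset.remove(rep)
            # Drop video AdaptationSets that no longer hold any Representation.
            if video and not list(aset.iterfind("mpd:Representation", NS)):
                period.remove(aset)
    if not found:
        raise ValueError(f"no video Representation with id {keep_id!r}")
    return ET.tostring(root, encoding="unicode")
```

Pinning the player to one video layer this way helps separate a desync that exists in a single layer from one caused by switching between misaligned layers.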

Conclusion

Investigating audio and video synchronization issues in MPEG-DASH streams is complicated because the processing chain involves multiple source and destination streams. Still, starting from the player and eliminating one module at a time is a promising approach for such investigations. Progressively simplifying the processing chain can be done in parallel, which speeds up the investigation.

Learn how Bianor can help you deal with this and other video streaming-related challenges.
